SARS-CoV-2 and COVID-19 have brought out both the best and the worst in medical research. There are numerous examples that highlight this, such as the deplorable state of COVID-19 prediction models and the controversy around a quite well-known meta-researcher. I won’t go into those topics. Instead, I will share two of my own experiences that illustrate both sides of the coin when it comes to going the extra mile by adopting some open science practices.

On the good side of things, there is our effort to implement an ordinal scale to measure functional outcome after COVID-19, the Post-COVID-19 Functional Status (PCFS) scale. After our letter to the editor, in which we only proposed the idea, we kept the project going by publishing a manual to standardize efforts. On top of that, we were lucky that many researchers and clinicians were willing to contribute time and effort with translations and adaptations for their own patients. To this day, we have 20 translations, with many more in the making. In addition, the scale is now included in several clinical guidelines and relevant clinical studies. This is a story of success – not for us, but for the open character of science in pandemic times. We had an idea, were able to share it quickly in a traditional journal with a letter to the editor, and were afterwards supported by OSF to keep the project going.

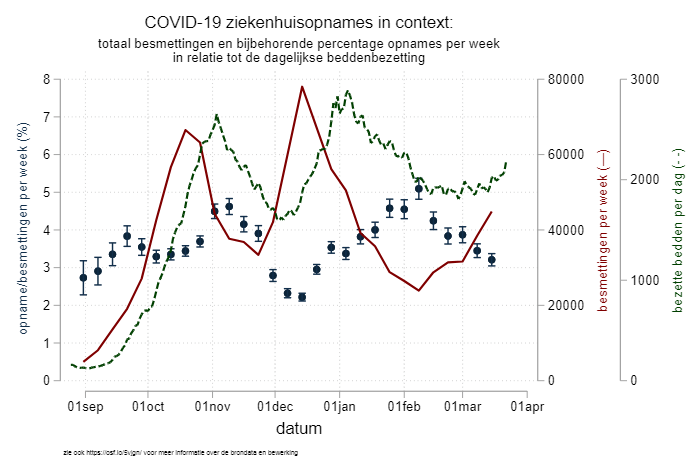

The bad side of things is the little call-to-action I wrote with my colleague DM. He observed that there was an interesting pattern in the percentage of patients who were admitted to the hospital with COVID-19 – with up to a twofold difference, as you can see in the graph above. The lower the weekly SARS-CoV-2 infection numbers, the higher the percentage of patients who were admitted to the hospital. Our argument at the time was that, in preparation for the third wave of infections in the Netherlands, medical professionals (GPs, ER doctors, home nurses, etc.) should try to keep the hospitals as empty as possible and keep the beds ready for the large numbers of patients that we knew were coming our way. Because it was more of an opinion piece, we skipped a detailed methods section, but we provided everything (code, data, graph, methods description) on a dedicated OSF page, which we kept up to date.

We offered our thoughts as a comment to the Dutch journal “Huisarts en Wetenschap”, which after a two-week review period decided to turn it down. Two thoughts on this: 1) I am not sure why there was peer review, as it was a call-to-action and not original research, and 2) the reason they gave us for the rejection (after revision!) was that the message was perhaps too complicated in these already confusing times – I will leave this without further comment. Next up was the journal NTVG, a more general medical journal in the Netherlands. The peer review process (again!) gave us another delay of two weeks before the paper was accepted. But you might have guessed it – the third wave had already started, which made our call-to-action redundant. In the end, we talked to the editor and mutually decided that we would withdraw our paper, and that at a later moment we might actually write a research paper on the causes behind the variation in the percentage of patients who tested positive and were admitted to the hospital.

All in all, a very dissatisfying experience, especially in comparison with the PCFS story. The weird thing here is that in both cases we did the same thing – we had an idea and wanted to share it with those who might be triggered by it. We started with some information on OSF, with dedicated project pages whose content grew over time as the story developed. In both cases we were commended for using OSF to share additional material in support of the letter to the editor. Still, the outcome is very different. This could of course simply be because the ideas are inherently different, but the delay in the publishing process not only killed the second project but also killed some of my enthusiasm for open science. Luckily, it is only some, and I hope (and am fairly sure) that future experiences will help me regain it.