My interests are broader than stroke, as you can see from my tweets as well as my publications. I am interested in how the medical scientific enterprise works and, more importantly, how it can be improved. The latest paper looks at both.

The paper, with the relatively boring title “Results dissemination from clinical trials conducted at German university medical centres was delayed and incomplete”, is a collaboration with QUEST and was carried by DS and his team. The short form of the title might just as well have been “RCTs don’t get published, and even if they do, it is often too late.”

Now, this is not a new finding, in the sense that older publications also showed high rates of non-publishing. Newer activities in this field, such as the trial trackers for the FDAAA and the EU, confirm this idea. The cool thing about these newer trackers is that they rely on continuous data collection through bots that crawl all over the interwebs to look for new trials. This upside has a couple of downsides though. First, because they are constantly being updated, these trackers do not work that well as a benchmarking tool. Second, they might miss some obscure type of publication, which would make reporting look worse than it really is. Third, to keep the trackers simple, they tend to use only one definition of what counts as “timely publication”, even though neither the field nor the guidelines are conclusive on this point.

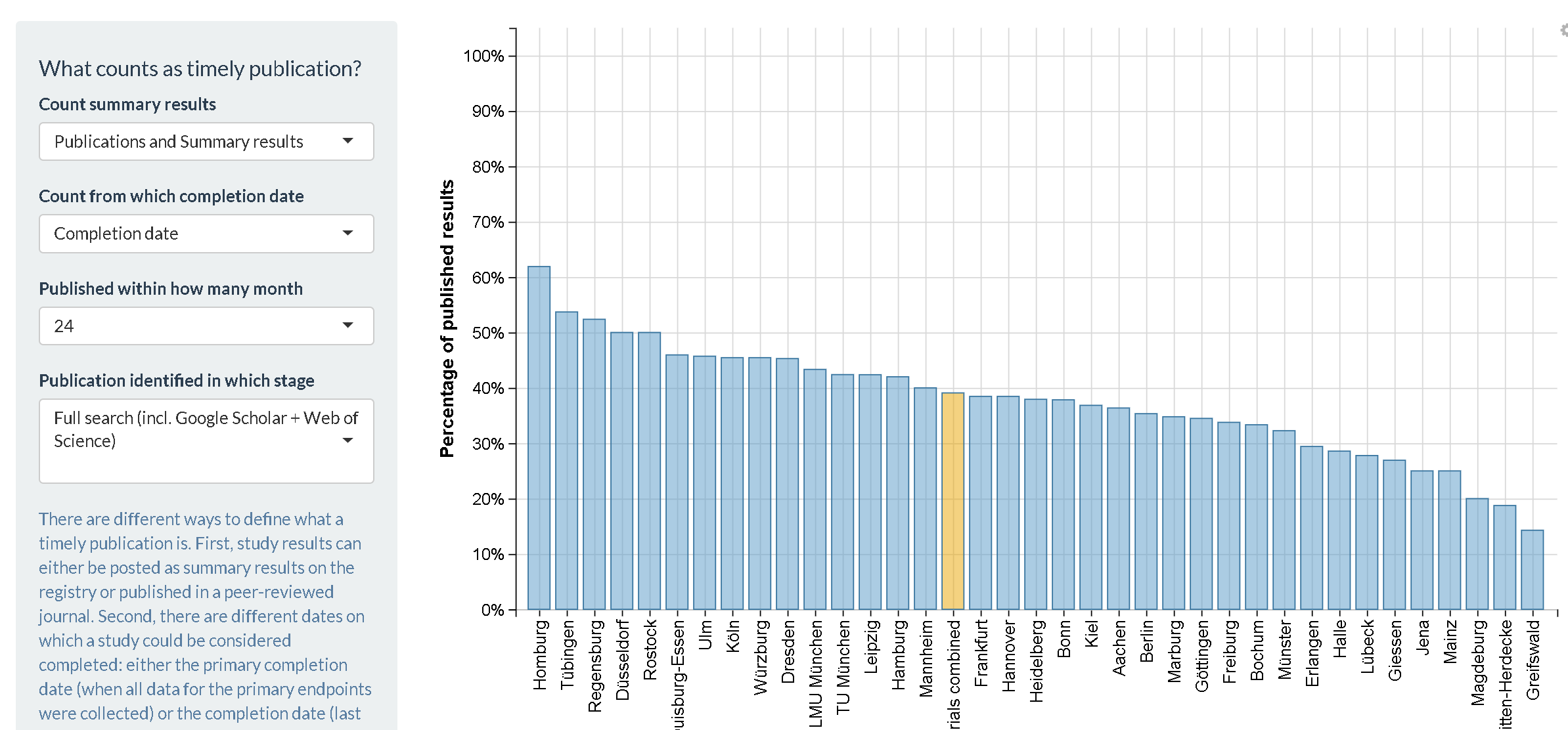

So our project is something different. To get a good benchmark, we looked at whether trials executed by/at German university medical centers were published in a timely fashion. We collected the data automatically as far as we could, but also did a complete double check by hand to ensure we didn’t skip publications (hint: we did; hand searching is important, potentially because of the language thing). Then we put all the data in a database and made a Shiny app so that readers themselves can decide what definitions and subsets they are interested in. The bottom line: on average, only ~50% of trials get published within two years after their formal end. That is too little and too slow.
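To make concrete why the choice of definition matters, here is a minimal sketch of how the share of “timely” publications shifts with the time window. The trial dates are made up for illustration and the function name is my own; this is not the code or the data behind the paper, and a year is approximated as 365.25 days.

```python
from datetime import date, timedelta

# Hypothetical records: (trial completion date, first results publication or None).
# These dates are invented for illustration only.
trials = [
    (date(2010, 1, 15), date(2011, 6, 1)),
    (date(2010, 3, 1), date(2013, 9, 30)),
    (date(2011, 7, 20), None),  # never published
    (date(2012, 2, 10), date(2013, 12, 5)),
]


def share_published_within(records, years):
    """Fraction of trials with results published within `years` of completion."""
    window = timedelta(days=round(365.25 * years))  # approximate year length
    timely = sum(
        1
        for completed, published in records
        if published is not None and published - completed <= window
    )
    return timely / len(records)


# Same data, different definitions of "timely".
for years in (2, 5):
    print(years, share_published_within(trials, years))
```

With these invented records, half of the trials count as published under a two-year window and three quarters under a five-year window, which is exactly the kind of comparison a single fixed definition hides.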

This is a cool publication because it provides a solid benchmark that truly captures the current state. Now it is up to us and the community to improve our reporting. We should track progress in the upcoming years with automated trackers, and in 5 years or so do the whole manual tracking once more. But that is not the only reason why it was so inspiring to work on this project; it was the diverse team of researchers from many different groups that made the work fun to do. The discussions we had on the right methodology were complex and even led to an ancillary paper by DS and his group. The fact that this paper was published in the most open way possible (open data, preprint, etc.) was also a good experience.

The paper is here on PubMed, the project page on OSF can be found here, and the preprint is on bioRxiv. And let us not forget the Shiny app, where you can check out the results yourself. Kudos go out to DS and SW, who really took the lead in this project.