We recently published one of our projects embedded within the PROSCIS study. This follow-up study, which includes 600+ men and women with acute stroke, forms the basis of many active projects in the team (1 published, many coming up).

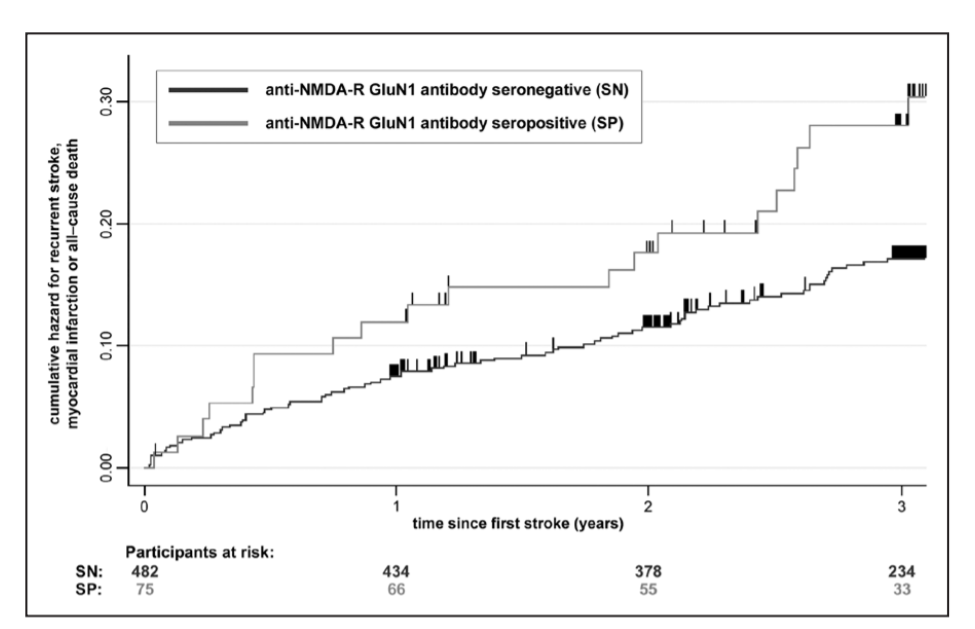

For this paper, PhD candidate PS measured autoantibodies to the NMDA receptor (NMDAR). Previous studies suggest that having these antibodies might be a marker of, or even induce, a kind of neuroprotective effect. That is not what we found: we showed that seropositive patients, especially those with the highest titers, have a 3 to 3.5-fold increase in the risk of having a worse outcome, as well as an almost 2-fold increased risk of CVD and death following the initial stroke.

Interesting findings, but some elements in our design do not allow us to draw very strong conclusions. One of them is the uncertainty about the patients' seropositivity status over time. Are the antibodies actually induced over time? Are they transient? PS has come up with a solid plan to answer some of these questions, which includes measuring the antibodies at multiple time points just after stroke. Now, in PROSCIS we only have one blood sample, so we need to use biosamples from other studies that were designed with multiple blood draws. The team of AM was equally interested in the topic, so we teamed up. I am looking forward to following up on the questions that our own research brings up!

The effort was led by PS and most praise should go to her. The paper is published in Stroke and can be found online via PubMed or via my Mendeley profile (doi: 10.1161/STROKEAHA.119.026100).

Update January 2020: There was a letter to the editor regarding our paper. We wrote a response.